Chatbot KPIs: The 10 Most Important Metrics for 2026 (with Benchmarks)

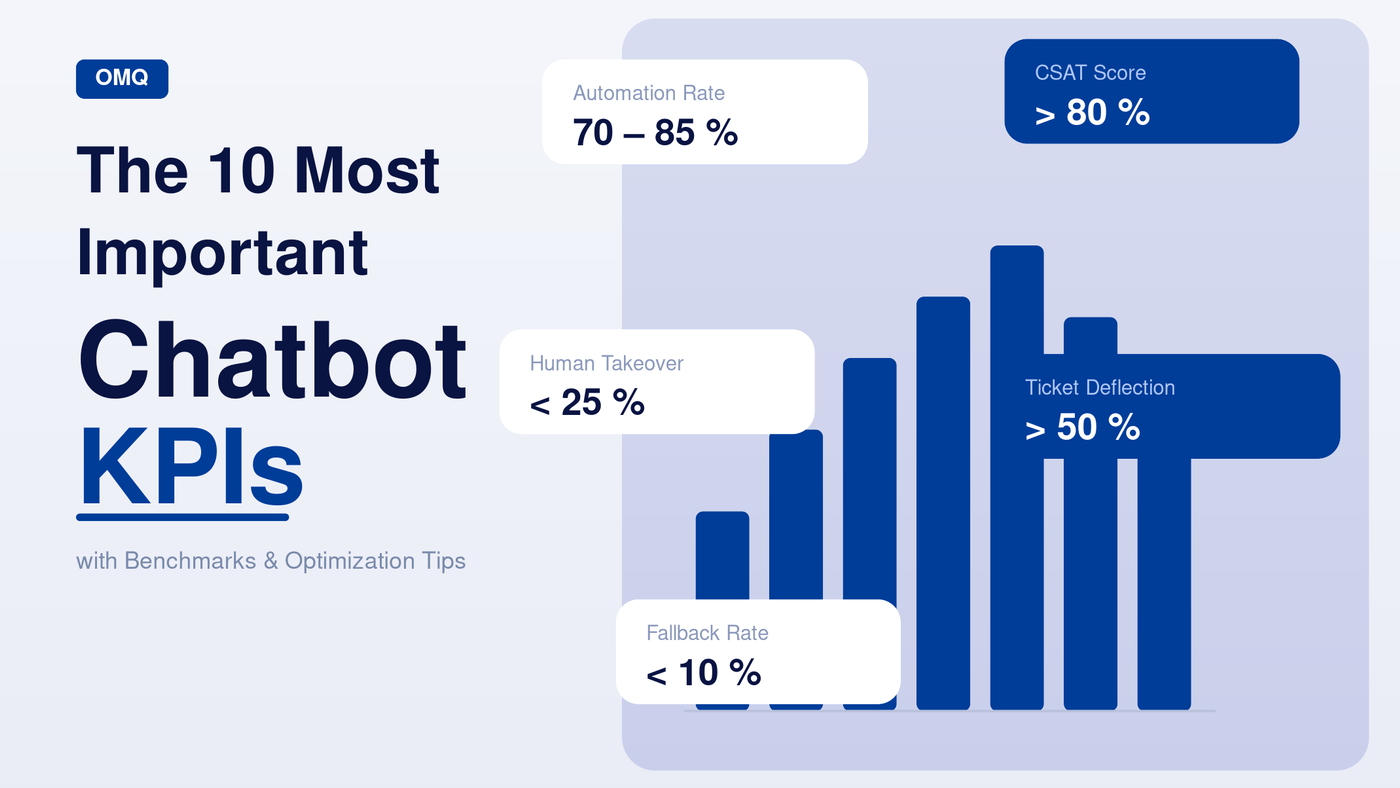

Which chatbot KPIs actually matter – and how to improve them. 10 metrics with benchmarks, formulas, and optimization tips for customer service.

An AI chatbot in customer service is only as good as its measurable impact. Without the right chatbot KPIs, you have no way of knowing whether your digital assistant is genuinely helping customers – or quietly eroding their trust.

In this article, we walk you through the 10 most important chatbot metrics, explain how to interpret them correctly, and show which benchmarks are realistic and worth striving for in 2026.

- What are chatbot KPIs and why do they matter?

- The 3 categories of chatbot metrics

- 1. Automation Rate

- 2. Resolution Rate (First Contact Resolution)

- 3. Human Takeover Rate

- 4. Customer Satisfaction (CSAT Score)

- 5. Fallback Rate

- 6. Average Handling Time (AHT)

- 7. Conversation Volume & Returning Users

- 8. Conversion Rate

- 9. Ticket Deflection Rate

- 10. Return Visitor Rate

- Bonus: ROI Calculation

- FAQ

What are chatbot KPIs and why do they matter?

Chatbot KPIs (Key Performance Indicators) are measurable figures that show how well your chatbot is fulfilling its purpose. Unlike classic web metrics such as page views or bounce rate, chatbot metrics go deeper: they measure efficiency, customer satisfaction, the degree of automation, and the direct contribution to business success.

Why does this matter? Because a chatbot without KPI monitoring is like a customer service agent without feedback: it does its job, but nobody knows whether it’s actually helping. According to Gartner, over 75% of all customer service interactions will be automated by 2026 – companies that don’t keep an eye on their metrics will fall behind.

In practical terms, chatbot metrics help you to:

- Spot weaknesses in the conversation flow early on

- Prove the chatbot’s ROI to management

- Drive targeted improvements to the knowledge base or training

- Make the reduction in support team workload tangible

The 3 categories of chatbot metrics

Before we dive into the individual KPIs, it’s worth grouping them into three overarching categories:

| Category | Metrics | Key question |
|---|---|---|
| 🤖 Efficiency | Automation rate, fallback rate, human takeover rate, AHT, resolution rate | How well is the chatbot working? |
| 😊 Customer experience | CSAT score, positive/negative ratings, return visitor rate | How do customers experience the chatbot? |
| 📈 Business impact | Conversion rate, ticket deflection rate, ROI | What does the chatbot deliver for the business? |

All three categories are equally important. A chatbot that is highly efficient but produces poor customer satisfaction is optimising the wrong side of the equation. Only by looking at all three together do you get a complete picture.

1. Automation Rate

The automation rate is the headline metric among chatbot KPIs. It shows what percentage of all incoming customer enquiries your chatbot resolves completely without human intervention.

Formula: (Enquiries fully resolved by the chatbot / Total enquiries) × 100

Benchmark: 70–85% – Well-optimised AI chatbots in customer service consistently reach these figures. Rates below 50% point to an inadequate knowledge base or insufficient training.
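As a minimal sketch, the formula can be computed directly from a log of enquiries. The `Enquiry` record and its `resolved_by_bot` flag are hypothetical names for illustration; your chatbot platform will export its own fields.

```python
from dataclasses import dataclass


@dataclass
class Enquiry:
    """One incoming customer enquiry (hypothetical record shape)."""
    resolved_by_bot: bool  # True if the chatbot resolved it with no human involved


def automation_rate(enquiries: list[Enquiry]) -> float:
    """(Enquiries fully resolved by the chatbot / Total enquiries) x 100."""
    if not enquiries:
        return 0.0  # avoid dividing by zero for an empty reporting period
    resolved = sum(1 for e in enquiries if e.resolved_by_bot)
    return resolved / len(enquiries) * 100


# Illustrative data: 78 of 100 enquiries resolved without an agent.
sample = [Enquiry(True)] * 78 + [Enquiry(False)] * 22
print(f"Automation rate: {automation_rate(sample):.1f}%")
```

With this sample the rate falls inside the 70–85% benchmark range.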

What drives the automation rate up? Above all, the quality of the knowledge base. The more precise and complete the answers to common customer questions stored in the system, the less often a human agent needs to step in.

Tip: A central knowledge base that automatically syncs across all channels raises the automation rate sustainably – with no need to maintain parallel content in multiple systems.

“Since we started using OMQ, the number of phone enquiries and emails on many everyday topics has noticeably decreased.” – Andreas Lindemann, Deputy Head of Online Service Centre at alltours

2. Resolution Rate (First Contact Resolution)

While the automation rate measures how many enquiries the bot answers, the resolution rate asks: were those enquiries actually resolved? A chatbot can respond without fixing the customer’s underlying problem – and that’s exactly what needs to be avoided.

Formula: (Enquiries resolved at first contact / Total enquiries) × 100

Benchmark: > 65% – For AI systems with a strong knowledge base, figures of 75–90% are achievable.

To improve the resolution rate, regularly check which enquiries were answered but then resubmitted. These patterns reveal where answer quality is falling short.
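One possible way to find such resubmissions is to look for the same customer raising the same topic again shortly after an answered enquiry. The sketch below assumes each log entry carries a customer ID, a topic label, and a timestamp, and uses a 7-day window; both the record shape and the window are illustrative assumptions.

```python
from datetime import datetime, timedelta

# (customer_id, topic, timestamp) for enquiries the chatbot marked as answered.
answered = [
    ("c1", "returns", datetime(2026, 1, 5, 10, 0)),
    ("c1", "returns", datetime(2026, 1, 6, 9, 30)),   # same topic again after one day
    ("c2", "delivery", datetime(2026, 1, 5, 11, 0)),
    ("c3", "returns", datetime(2026, 1, 7, 14, 0)),
    ("c3", "returns", datetime(2026, 1, 20, 8, 0)),   # outside the 7-day window
]


def resubmitted_topics(records, window=timedelta(days=7)):
    """Count, per topic, enquiries repeated by the same customer within `window`."""
    last_seen = {}  # (customer_id, topic) -> timestamp of the previous enquiry
    repeats = {}    # topic -> number of resubmissions
    for customer, topic, ts in sorted(records, key=lambda r: r[2]):
        key = (customer, topic)
        if key in last_seen and ts - last_seen[key] <= window:
            repeats[topic] = repeats.get(topic, 0) + 1
        last_seen[key] = ts
    return repeats


print(resubmitted_topics(answered))
```

Topics with many resubmissions are the first candidates for a closer look at answer quality.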

3. Human Takeover Rate

The human takeover rate shows how often a chatbot has to hand a conversation over to a human agent. It is the direct counterpart to the automation rate – and reveals where the AI reaches its limits.

Formula: (Number of handovers to agent / Total conversations) × 100

Benchmark: < 25% – A good rule of thumb for most industries. Depending on the sector (e.g. medical or legal enquiries), a higher rate may be acceptable.

Important: A low human takeover rate is only a positive sign if the CSAT score is simultaneously high. A chatbot that never hands over but also never truly helps is optimising the wrong metric.

Tip: Systematically analyse which topics trigger a handover most frequently. These topics should be prioritised for addition to the knowledge base.
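A short sketch of that analysis, assuming every handover is logged with a topic label (the labels and counts below are made up for illustration):

```python
from collections import Counter

# Topic label of each conversation that was handed over to an agent.
handover_topics = ["refund", "invoice", "refund", "contract change", "refund", "invoice"]
total_conversations = 40  # all conversations in the same period

# (Number of handovers to agent / Total conversations) x 100
handover_rate = len(handover_topics) / total_conversations * 100

# Topics that trigger handovers most often, highest first.
top_topics = Counter(handover_topics).most_common(2)

print(f"Human takeover rate: {handover_rate:.1f}%")
for topic, count in top_topics:
    print(f"{topic}: {count} handovers")
```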

4. Customer Satisfaction (CSAT Score)

The Customer Satisfaction Score (CSAT) measures directly how satisfied users were with their chatbot conversation. It is typically collected at the end of a conversation with a simple question: “How satisfied were you with this service?” – rated on a scale of 1 to 5 or as a thumbs up/down.

Formula: (Number of positive ratings / Total ratings) × 100

Benchmark: > 80% – Good chatbot systems achieve CSAT scores of 80% and above. Scores below 60% are a clear warning sign.

Practical tip: break the CSAT score down by topic area. The chatbot may score very well on standard questions but poorly on specific product queries. That level of granularity is invaluable for targeted optimisation.
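The breakdown by topic could look like the following sketch. It assumes each rating is stored as a topic label plus a positive/negative flag; the sample values are invented.

```python
from collections import defaultdict

# (topic, was_positive) for each rating collected at the end of a conversation.
ratings = [
    ("delivery status", True), ("delivery status", True), ("delivery status", True),
    ("delivery status", True), ("delivery status", False),
    ("product queries", True), ("product queries", False),
    ("product queries", False), ("product queries", False),
]


def csat_by_topic(ratings):
    """(Number of positive ratings / Total ratings) x 100, computed per topic."""
    counts = defaultdict(lambda: [0, 0])  # topic -> [positive, total]
    for topic, positive in ratings:
        counts[topic][0] += int(positive)
        counts[topic][1] += 1
    return {topic: pos / total * 100 for topic, (pos, total) in counts.items()}


for topic, score in csat_by_topic(ratings).items():
    print(f"{topic}: {score:.0f}% CSAT")
```

In this invented sample the overall score hides a weak topic: standard delivery questions rate well, while product queries fall far below the 60% warning threshold.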

5. Fallback Rate

The fallback rate shows how often the chatbot was unable to understand an enquiry at all and responded with a generic error message. It is a direct measure of the quality of the NLP or AI model.

Formula: (Number of fallback responses / Total conversations) × 100

Benchmark: < 10% – Below 5% is excellent. Rates above 20% indicate significant gaps in training or the knowledge base.

Common causes of high fallback rates include: overly narrow question formulations in training, insufficient synonym coverage, inadequate context processing, or simply missing content for certain topic areas.

Tip: Export your fallback logs weekly and identify the top 10 topics the chatbot doesn’t understand. Simply adding these ten topics to the knowledge base can reduce the fallback rate by 30–50%.
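To make the thresholds above easy to apply in a report, the rate can be mapped to a label. This is only a sketch: the article names no band for rates between 10% and 20%, so the label used for that range is an assumption.

```python
def fallback_rate(fallbacks: int, conversations: int) -> float:
    """(Number of fallback responses / Total conversations) x 100."""
    if conversations == 0:
        return 0.0
    return fallbacks / conversations * 100


def assess_fallback_rate(rate: float) -> str:
    """Map a fallback rate in percent to the bands described in the text."""
    if rate < 5:
        return "excellent"
    if rate < 10:
        return "within benchmark"
    if rate <= 20:
        return "above benchmark"  # band not named in the article; label is an assumption
    return "significant gaps"


rate = fallback_rate(fallbacks=120, conversations=2000)
print(f"{rate:.1f}% -> {assess_fallback_rate(rate)}")
```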

6. Average Handling Time (AHT)

Average Handling Time (AHT) measures how long a chatbot conversation takes on average. For chatbots, this differs fundamentally from AHT in human support: a quickly closed conversation is not inherently good – it could indicate an abandoned conversation or a frustrated drop-off.

Formula: Total conversation duration / Number of conversations

Benchmark: 2–5 minutes – For standard customer service enquiries. Complex requests may take longer, as long as the resolution rate remains high.

Analysis tip: segment AHT by enquiry type. A healthy pattern shows short conversations for simple questions (opening hours, delivery status) and longer ones for complex topics (returns, technical support).
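Segmenting AHT might be sketched as follows, assuming each conversation is logged with an enquiry type and a duration in seconds (both hypothetical fields with invented values):

```python
from collections import defaultdict
from statistics import mean

# (enquiry_type, duration_in_seconds) for each conversation.
conversations = [
    ("opening hours", 45),
    ("delivery status", 70),
    ("returns", 310),
    ("returns", 250),
    ("opening hours", 35),
]


def aht_by_type(conversations):
    """Total conversation duration / Number of conversations, per enquiry type."""
    durations = defaultdict(list)
    for enquiry_type, seconds in conversations:
        durations[enquiry_type].append(seconds)
    return {enquiry_type: mean(values) for enquiry_type, values in durations.items()}


for enquiry_type, seconds in aht_by_type(conversations).items():
    print(f"{enquiry_type}: {seconds / 60:.1f} min")
```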

7. Conversation Volume & Returning Users

Conversation volume shows the total number of chatbot conversations per time period. On its own, this figure says little – but as a trend, it reveals a great deal: if volume is growing, more customers are using the chatbot. If it’s declining, the entry point (widget visibility, placement) may be the issue.

Even more interesting is the share of returning users. If customers voluntarily open the chatbot a second or third time, that is a strong signal of high relevance and trust.

Tip: Analyse peak times in conversation volume. Make sure the knowledge base covers the topics that dominate those high-traffic periods, so the chatbot relieves your service team when pressure is highest.

8. Conversion Rate

The conversion rate measures how often the chatbot triggers a desired action – for example a product enquiry, a demo booking, a purchase, or completing a form. For sales-focused or lead-generating chatbots in particular, this is one of the most important metrics.

Formula: (Number of conversions / Number of conversations) × 100

Benchmark: 5–15% – Chatbots with well-placed CTAs and clear conversation flows achieve these figures. With highly segmented audiences and personalised flows, 20%+ is possible.

For e-commerce businesses, it is worth linking the conversion rate to the average basket value to calculate the chatbot’s direct revenue contribution.
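A rough sketch of that link is shown below. It assumes every counted conversion is a purchase at the average basket value, which is a simplification; demo bookings or form completions would need their own valuation.

```python
def chatbot_revenue(conversations: int, conversions: int, avg_basket_value: float):
    """Return (conversion rate in percent, estimated revenue contribution)."""
    # (Number of conversions / Number of conversations) x 100
    rate = conversions / conversations * 100 if conversations else 0.0
    # Simplifying assumption: each conversion is worth one average basket.
    revenue = conversions * avg_basket_value
    return rate, revenue


rate, revenue = chatbot_revenue(conversations=2000, conversions=160, avg_basket_value=65.0)
print(f"Conversion rate: {rate:.1f}%, estimated revenue: EUR {revenue:,.2f}")
```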

9. Ticket Deflection Rate

The ticket deflection rate shows how many support tickets were prevented by the chatbot. Unlike the automation rate – which looks at all chatbot conversations – this metric focuses specifically on reducing the load on the service team.

Formula: (Enquiries resolved without a ticket / Enquiries that would otherwise have created a ticket) × 100

Benchmark: > 50% – Leading self-service systems deflect 50–80% of all potential tickets. For a medium-sized service team, even a 10-percentage-point improvement can make the difference between being overloaded and functioning normally.

The ticket deflection rate has a direct ROI connection: every deflected ticket saves processing time in the service department – typically 5–15 minutes. With 1,000 deflected tickets per month, that amounts to up to 250 hours of saved agent time.
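The arithmetic behind this estimate can be checked in a few lines. The function name is illustrative; the figures are the ones quoted above.

```python
def saved_agent_hours(deflected_tickets: int, minutes_per_ticket: float) -> float:
    """Agent time saved, in hours, for a given number of deflected tickets."""
    return deflected_tickets * minutes_per_ticket / 60


low = saved_agent_hours(1000, 5)    # lower bound: 5 minutes per ticket
high = saved_agent_hours(1000, 15)  # upper bound: 15 minutes per ticket
print(f"Saved agent time: {low:.0f} to {high:.0f} hours per month")
```

At 5 minutes per ticket the saving is roughly 83 hours; the 250 hours quoted above correspond to the 15-minute upper bound.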

“When it comes to answer quality, OMQ is unbeatable. No other system delivers results as precise and reliable as OMQ – especially for complex enquiries.” – Jens Roßberg, Head of Support at MAGIX

10. Return Visitor Rate

The return visitor rate shows how many users voluntarily use the chatbot a second time or more. It is one of the strongest indicators of genuine usefulness – because no customer willingly returns to a system that failed to help them.

Formula: (Returning users / Total users) × 100

Benchmark: > 30% – A return visitor rate above 30% shows that your chatbot is perceived as a reliable tool. Self-service portals with particularly useful chatbots achieve figures of 40–60%.

Alongside this, it is worth tracking conversation depth: how many different topics does a user raise on average in a single session? High conversation depth combined with a high CSAT score signals genuine trust in the chatbot.
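The return visitor rate itself can be derived from session logs by counting users with two or more sessions and dividing by all users. The sketch below assumes one user ID is recorded per chatbot session; the IDs are invented.

```python
from collections import Counter

# One entry per chatbot session, identified by a (hypothetical) user ID.
session_user_ids = ["u1", "u2", "u1", "u3", "u4", "u2", "u1", "u5"]

sessions_per_user = Counter(session_user_ids)

# A returning user is anyone with two or more sessions in the period.
returning_users = sum(1 for n in sessions_per_user.values() if n >= 2)
return_visitor_rate = returning_users / len(sessions_per_user) * 100

print(f"Return visitor rate: {return_visitor_rate:.0f}%")
```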

All 10 chatbot KPIs at a glance

| KPI | Formula | Benchmark | Category |
|---|---|---|---|
| Automation rate | (Enquiries fully resolved by the chatbot / Total enquiries) × 100 | 70–85% | Efficiency |
| Resolution rate (FCR) | (Enquiries resolved at first contact / Total enquiries) × 100 | > 65% | Efficiency |
| Human takeover rate | (Handovers to agent / Total conversations) × 100 | < 25% | Efficiency |
| CSAT score | (Positive ratings / Total ratings) × 100 | > 80% | Customer experience |
| Fallback rate | (Fallback responses / Total conversations) × 100 | < 10% | Efficiency |
| Avg. handling time (AHT) | Total conversation duration / Number of conversations | 2–5 min. | Efficiency |
| Conversation volume | Absolute (trend) | Growing | Customer experience |
| Conversion rate | (Conversions / Conversations) × 100 | 5–15% | Business impact |
| Ticket deflection rate | (Enquiries resolved without a ticket / Potential tickets) × 100 | > 50% | Business impact |
| Return visitor rate | (Returning users / Total users) × 100 | > 30% | Customer experience |

Bonus: How to calculate your chatbot’s ROI

All KPIs together make a well-founded ROI calculation possible:

Net benefit = (Saved ticket volume × Cost per ticket) + Revenue uplift from conversions − Chatbot operating costs

ROI (%) = (Net benefit / Chatbot operating costs) × 100

Example calculation: A mid-sized e-commerce business handles 5,000 support tickets per month. The chatbot deflects 60% (= 3,000 tickets). If each ticket costs an average of €8 in agent time, the chatbot saves €24,000 per month, or €288,000 per year. If the typical licence costs of €450–700 are monthly (€5,400–8,400 per year), this translates to an ROI of over 3,000% in the first year.
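The example can be checked numerically. The sketch below rests on two assumptions the text does not state explicitly: that the licence cost is a monthly figure, and that the ROI percentage is the net benefit divided by the operating costs. Revenue uplift is set to zero because the example quotes none.

```python
def net_benefit(saved_tickets: int, cost_per_ticket: float,
                revenue_uplift: float, operating_costs: float) -> float:
    """(Saved ticket volume x Cost per ticket) + Revenue uplift - Operating costs."""
    return saved_tickets * cost_per_ticket + revenue_uplift - operating_costs


def roi_percent(saved_tickets: int, cost_per_ticket: float,
                revenue_uplift: float, operating_costs: float) -> float:
    """Net benefit relative to operating costs, in percent (assumed definition)."""
    benefit = net_benefit(saved_tickets, cost_per_ticket, revenue_uplift, operating_costs)
    return benefit / operating_costs * 100


monthly_tickets = 5_000
deflected_per_month = monthly_tickets * 60 // 100  # 60% deflection -> 3,000 tickets
monthly_savings = deflected_per_month * 8.0        # EUR 8 per ticket -> EUR 24,000

for monthly_licence in (450.0, 700.0):
    first_year_roi = roi_percent(
        saved_tickets=deflected_per_month * 12,
        cost_per_ticket=8.0,
        revenue_uplift=0.0,  # the example quotes no conversion revenue
        operating_costs=monthly_licence * 12,
    )
    print(f"Licence EUR {monthly_licence:.0f}/month: first-year ROI of about {first_year_roi:,.0f}%")
```

Under these assumptions, even the upper licence cost of €700 per month yields a first-year ROI of roughly 3,300%, which is consistent with the "over 3,000%" stated in the example.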

Tip: Always link the ticket deflection rate to the CSAT score. Only when both figures are high does your chatbot deliver genuine value – deflection should never come at the expense of service quality.

Improving chatbot KPIs with OMQ

OMQ is a cross-channel AI customer service platform designed precisely around the KPIs described above. At its core is a centrally managed, AI-powered knowledge base that automatically synchronises across all channels:

| Product | Impact on KPIs |
|---|---|
| OMQ Chatbot | Boosts automation rate and ticket deflection |
| OMQ Help | Prevents tickets before they arise – improves FCR |
| OMQ Assist | Supports agents in real time – improves AHT and CSAT |
| OMQ Reply | Automates email enquiries – reduces human takeover rate |
| OMQ Contact | Answers enquiries directly in the form – boosts conversion rate |

All products draw on the same knowledge base – content is maintained once and takes effect immediately across all channels. That means every improvement to the knowledge base simultaneously lifts all relevant KPIs.

Conclusion: Chatbot KPIs as the foundation for continuous improvement

Chatbot KPIs are not a one-off exercise – they are the foundation for continuous optimisation. Knowing your metrics means you can intervene precisely: add better answers, close knowledge gaps, optimise conversation flows, and demonstrate the AI chatbot’s ROI to management.

The key takeaways in brief: the automation rate and ticket deflection rate show the direct relief your chatbot provides to your team. CSAT and resolution rate reflect the quality of the customer experience. Human takeover rate and fallback rate reveal where the chatbot still has room to grow. Conversion rate and ROI connect the chatbot directly to your business results. And the return visitor rate is the underestimated signal for sustainable, long-term value.

The difference between an average and an excellent chatbot lies not in the technology alone – it lies in measuring these metrics regularly, understanding them, and acting on them deliberately.