ChatGPT Chatbot in Customer Service - The Revolution of Customer Communication?

ChatGPT from OpenAI is revolutionizing the way we communicate with AI bots. We'll show you whether this new chatbot technology can also be used in customer service and how modern chatbot systems improve online communication.

Introduction - What is ChatGPT?

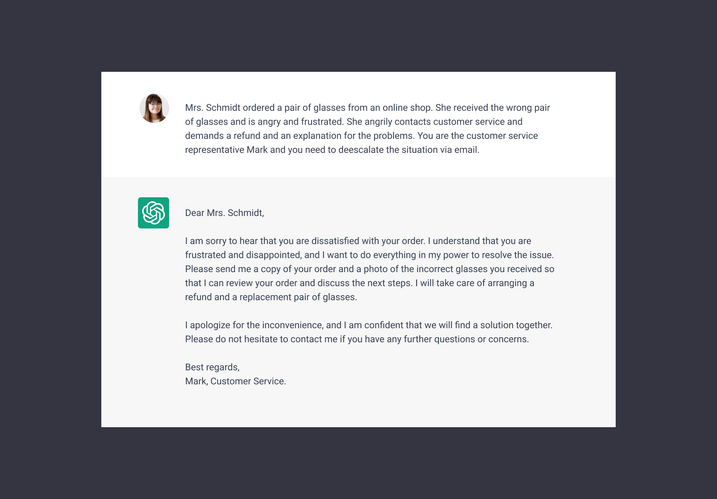

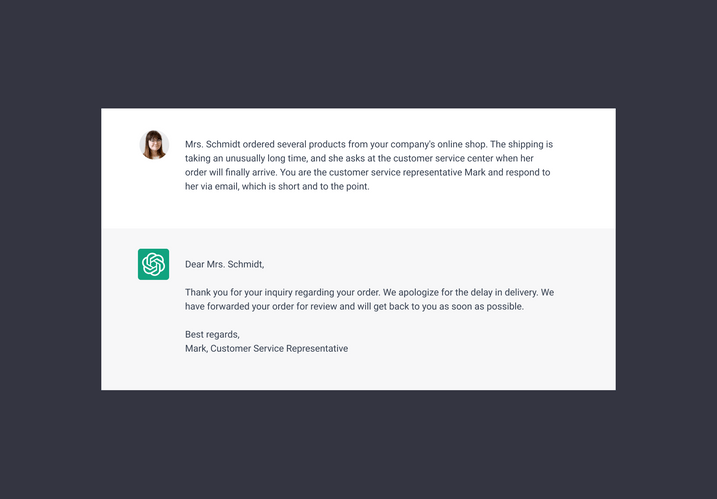

ChatGPT is the talk of the town - and for good reason: the new chatbot model is particularly good at talking to people. The big leap from previous chatbots is that you can have a normal conversation, with ChatGPT providing appropriate responses in seconds. For example, you can assign the model the role of a customer service agent and then have it answer customer queries:

ChatGPT independently formulates an email response for customer service.

Because ChatGPT is so strong at communication, using this technology in customer service seems obvious. But can ChatGPT models actually be used there? This article gets to the bottom of that question.

- How does the ChatGPT chatbot technology work?

- Difference of ChatGPT to current chatbot models

- ChatGPT example for customer service usage

- Is this technology suitable for customer service?

- Problems and solutions: How to optimise the ChatGPT model for customer service

- ChatGPT-level chatbot in customer service

- Coming Soon: The OMQ ChatGPT-level chatbot as a beta version

How does the ChatGPT chatbot technology work?

ChatGPT is a specialised form of OpenAI’s GPT (Generative Pre-trained Transformer) language model, specifically designed to respond naturally to questions and instructions. GPT is based on machine learning: it is an artificial neural network, a structure loosely inspired by the human nervous system. ChatGPT has already been trained extensively, and this training is what shaped its multi-layered network.

ChatGPT can answer in natural language like human support agents.

Generative Pre-trained Transformer

Generative Pre-trained Transformer 3 (GPT-3) is an autoregressive language model that uses deep learning to produce human-like text. Given an initial text as prompt, it will produce text that continues the prompt.

Trained on a text corpus of roughly 500 billion tokens (words and word fragments), the software learned how language works, how written and spoken language differ, and which kind of answer fits which question.
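
To make "produce text that continues the prompt" more tangible, here is a deliberately tiny sketch of an autoregressive loop. It is not how GPT-3 works internally: the word-pair counting model, the function names `train_bigram` and `continue_prompt`, and the mini corpus are assumptions made up for illustration. The only thing it shares with a real language model is the principle of predicting the next word from what came before and then repeating the step.

```python
from collections import Counter, defaultdict


def train_bigram(corpus: str) -> dict:
    """Count, for every word, which words follow it in the training text."""
    words = corpus.lower().split()
    follows = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        follows[current][following] += 1
    return follows


def continue_prompt(model: dict, prompt: str, max_new_words: int = 5) -> str:
    """Autoregressive loop: append the most frequent next word, then repeat."""
    words = prompt.lower().split()
    if not words:
        return ""
    for _ in range(max_new_words):
        candidates = model.get(words[-1])
        if not candidates:
            break  # the toy model has never seen this word, so it stops
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)


# Hypothetical mini corpus; a real model is trained on vastly more text.
corpus = "free shipping is available from 49 euros . shipping is available today ."
model = train_bigram(corpus)
print(continue_prompt(model, "free shipping", max_new_words=2))
# prints: free shipping is available
```

A real model looks at the whole preceding text rather than only the last word, which is one reason its continuations are so much more coherent.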

ChatGPT start page from OpenAI

Difference of ChatGPT to current chatbot models

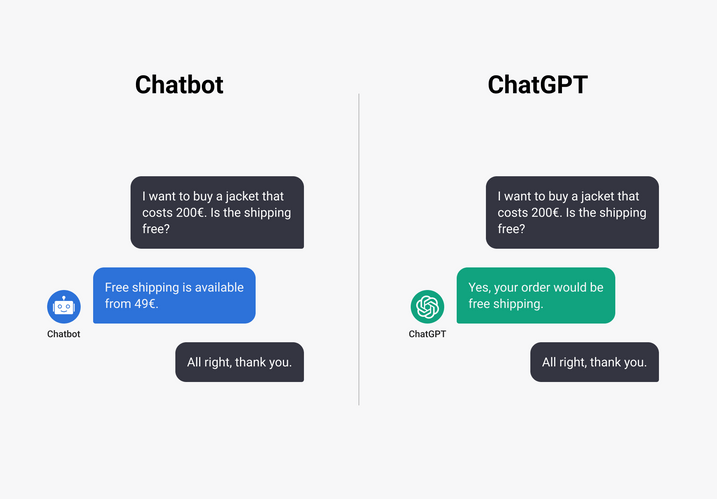

Traditional chatbots know how to answer some questions, but they cannot tailor the answer to the specific request. In a typical chatbot conversation, the bot therefore often asks “What do you mean?” or suggests several answer options from which the customer has to pick the right one.

ChatGPT example for customer service usage

The ChatGPT model, on the other hand, understands and processes messages immediately. Suppose a customer asks: “I want to buy a jacket that costs 500€. Is the shipping free?” ChatGPT reads the entries in the database, learns that the company offers free shipping from 49€, and recognises that 500€ is more than 49€. It links these two pieces of information and gives a direct answer, whereas a current chatbot would only reply: “Free shipping is available from 49€.”

Difference in answering customer queries between current chatbots and ChatGPT.
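
The difference between the two answers can be spelled out in a few lines. This is only a sketch of the reasoning step, not of ChatGPT itself: a language model links the two facts implicitly, while the code below does it with an explicit comparison. The `ShippingPolicy` class and both function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ShippingPolicy:
    free_from_eur: float  # order value from which shipping is free


def generic_answer(policy: ShippingPolicy) -> str:
    """What a current chatbot returns: the stored fact, unrelated to the order."""
    return f"Free shipping is available from {policy.free_from_eur:.0f}€."


def specific_answer(policy: ShippingPolicy, order_value_eur: float) -> str:
    """Link the stored fact with the value mentioned in the customer's message."""
    if order_value_eur >= policy.free_from_eur:
        return (
            f"Yes, shipping is free: your order of {order_value_eur:.0f}€ "
            f"reaches the {policy.free_from_eur:.0f}€ threshold."
        )
    missing = policy.free_from_eur - order_value_eur
    return f"Not yet: shipping becomes free if you add {missing:.0f}€ to your order."


policy = ShippingPolicy(free_from_eur=49)
print(generic_answer(policy))
print(specific_answer(policy, order_value_eur=500))
```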

Is this technology suitable for customer service?

To be used in customer service, the technology must first be adapted to the needs of customer service. For example, ChatGPT treats some information as correct even when it may not be: it assumes that websites always have a “forgot password” button on the login page.

The problem is that the software assumes an answer that may apply to many companies, but maybe not to a specific case. Vague and general information is thus confidently passed on from the bot to the user.

So at the moment it is not yet possible to use ChatGPT models in support, but with a little coaching these weaknesses can be addressed so that the model can answer customer queries after all.

Problems and solutions: How to optimise the ChatGPT model for customer service

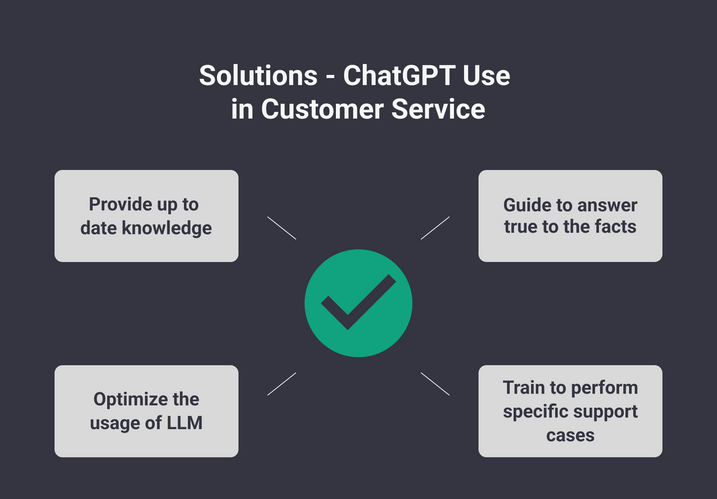

The following “weak points” of the ChatGPT model are the reason why the system cannot yet be used in support. Addressing them is what makes it possible to build an AI agent that answers customer queries directly in the chat.

With these solutions, the ChatGPT model can be optimized for customer service.

ChatGPT was trained on data up to 2021, so its knowledge ends there (e.g. it knows nothing about events from 2022 onwards). Additional training or knowledge sharing is needed to close this gap.

Solution: Provide current knowledge

The model is provided with current knowledge, which it then learns and uses as its main data source.
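
One common way to provide current knowledge is to place it in front of the customer's question every time the model is called, instead of retraining the model. The sketch below assumes that approach; the article does not say which method is actually used. The function `build_prompt`, the wording of the instructions and the example facts are all hypothetical, and the resulting string would still have to be sent to a language model.

```python
from datetime import date


def build_prompt(question: str, knowledge: list, today: date) -> str:
    """Put current facts in front of the question so the model answers from them."""
    facts = "\n".join(f"- {fact}" for fact in knowledge)
    return (
        f"Today is {today.isoformat()}.\n"
        "Answer using only the facts below. "
        "If they do not cover the question, say that you do not know.\n"
        f"Facts:\n{facts}\n"
        f"Customer question: {question}\n"
        "Answer:"
    )


# Hypothetical knowledge base entries maintained by the support team.
knowledge = [
    "Free shipping is available from 49€.",
    "Returns are accepted within 30 days.",
]
prompt = build_prompt("Is the shipping free?", knowledge, today=date(2023, 1, 15))
print(prompt)
```

Because the facts travel with each request, updating the knowledge base changes the answers immediately, without touching the model.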

The main problem with ChatGPT is that the bot is very good at sounding authentic, but the data it outputs is not always correct or real.

Phone numbers, for example, are simply modelled on a standard format (such as a US number) and then sent to the customer. The result is not a real number but an example of what a real number might look like.

AI Alignment Problem

In artificial intelligence (AI), the “alignment problem” refers to the challenges caused by the fact that machines simply do not have the same values as us. In fact, when it comes to values, machines at a fundamental level don’t really get much more sophisticated than understanding that 1 is different from 0.

Solution: Guide to truthful answers

The model must be made to answer according to the facts and not just sound good. This is made possible with alignment constraints that make the system behave like a service agent. It can be thought of as instructing a human agent to use the knowledge base when answering and defining the attitude to take towards customers.
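
One small building block of such a constraint is a check that runs after the model has answered. The sketch below picks up the phone number problem: it flags every phone-like number in an answer that does not appear in the knowledge base. The regular expression, the function names and the sample texts are assumptions for illustration. The pattern is deliberately rough and would also match other long digit sequences such as order numbers.

```python
import re

# Rough pattern for phone-like sequences: optional "(" and "+", then digits
# mixed with spaces, brackets, slashes or hyphens. Illustrative only.
PHONE_PATTERN = re.compile(r"\(?\+?\d[\d\s()/-]{6,}\d")


def normalize(number: str) -> str:
    """Keep digits only so that different spellings of one number compare equal."""
    return re.sub(r"\D", "", number)


def unsupported_phone_numbers(answer: str, knowledge_base: list) -> list:
    """Return numbers in the answer that no knowledge base entry contains."""
    known = {
        normalize(match)
        for entry in knowledge_base
        for match in PHONE_PATTERN.findall(entry)
    }
    return [
        match
        for match in PHONE_PATTERN.findall(answer)
        if normalize(match) not in known
    ]


knowledge_base = ["Our hotline is +49 30 1234567."]  # hypothetical entry
good_answer = "Please call +49 30 1234567."
bad_answer = "Please call (555) 123-4567."

print(unsupported_phone_numbers(good_answer, knowledge_base))
print(unsupported_phone_numbers(bad_answer, knowledge_base))
```

An answer with unsupported numbers could then be blocked or regenerated before it reaches the customer.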

Running Large Language Models (LLMs) like ChatGPT requires special hardware. When a model the size of ChatGPT runs on a normal PC CPU, it processes one word in about 10 seconds, so the process is very compute- and time-intensive. Even with special hardware such as GPUs (the supercomputers of the modern AI age), an inference call (a request for a response, as in a chat) can take some time.

Since the end of 2022, however, it has been possible to create LLM-based applications within a reasonable time and cost framework. Nevertheless, these processes must be developed further continuously so that they run efficiently.

Solution: Optimising the use of LLMs

The use of the Large Language Model should be optimised so that simple tasks (e.g. finding relevant documents in the database) are handled by simpler models, while the LLM reads and understands the customers’ messages and then generates the responses.
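
As a rough illustration of this division of labour, the sketch below uses a very cheap method (counting shared words) to pick the most relevant documents, so that only those would be handed to the LLM. The scoring method, the function names `tokenize` and `top_documents`, and the sample documents are assumptions. Production systems typically use stronger retrieval models, and the LLM call itself is left out.

```python
import re


def tokenize(text: str) -> set:
    """Lower-case the text and split it into a set of words and numbers."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def top_documents(question: str, documents: list, k: int = 2) -> list:
    """Cheap retrieval step: rank documents by words shared with the question."""
    question_words = tokenize(question)
    scored = [(len(question_words & tokenize(doc)), doc) for doc in documents]
    scored = [item for item in scored if item[0] > 0]  # drop unrelated documents
    scored.sort(key=lambda item: item[0], reverse=True)
    return [doc for _, doc in scored[:k]]


# Hypothetical knowledge base documents.
documents = [
    "Free shipping is available from 49 euros.",
    "You can reset your password in the account settings.",
    "Returns are accepted within 30 days.",
]
relevant = top_documents("Is the shipping free?", documents, k=1)
print(relevant)  # only these documents would be passed on to the LLM
```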

ChatGPT and similar models mostly only output information but cannot act. They are very smart in communication, but ultimately they only react to human input and cannot carry out actions.

The model needs to be trained and adapted for the actions that specific support requests require, so that it can, for example, change customer data or file damage reports on its own.

Solution: Train for specific support tasks

Special procedures are required for these support cases. For example, forms can be shown directly in the chat: customers enter their data there, and it can be processed straight away (e.g. for name changes, damage reports, address changes, etc.).
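
A form-based flow of this kind could be sketched as follows. Everything here is hypothetical: the `AddressChangeForm` fields, the in-memory `customer_db` dictionary standing in for a real customer system, and the reply texts. The point is only that structured form data can be validated and applied without the language model having to guess anything.

```python
from dataclasses import dataclass


@dataclass
class AddressChangeForm:
    customer_id: str
    street: str
    postal_code: str
    city: str

    def missing_fields(self) -> list:
        """Names of all fields the customer left empty."""
        return [name for name, value in vars(self).items() if not value.strip()]


def handle_form(form: AddressChangeForm, customer_db: dict) -> str:
    """Validate the submitted form and apply the change to the customer record."""
    missing = form.missing_fields()
    if missing:
        return "Please fill in: " + ", ".join(missing)
    if form.customer_id not in customer_db:
        return "We could not find that customer number."
    customer_db[form.customer_id].update(
        street=form.street, postal_code=form.postal_code, city=form.city
    )
    return "Your address has been updated."


# Hypothetical stand-in for a real customer database.
customer_db = {"C-1": {"street": "Old St 1", "postal_code": "10115", "city": "Berlin"}}
form = AddressChangeForm("C-1", "New St 2", "20095", "Hamburg")
print(handle_form(form, customer_db))
```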

ChatGPT-level chatbot in customer service

ChatGPT takes chatbots to a new level because the model has so much knowledge and interacts so naturally. As mentioned earlier, however, the technology needs to be adapted to customer service before it can be used to answer customer queries. Until then, the model can inspire and support service agents, for example by helping them find good wording for messages.

If the above-mentioned weaknesses of the system are improved with the solutions provided, it is theoretically possible to develop a ChatGPT-level customer support chatbot that answers customer queries just as intelligently and in natural language, but is fully tuned to a knowledge base.

Coming Soon: The OMQ ChatGPT-level chatbot as a beta version

We are currently working on optimising the GPT-level model to make it suitable for customer service. In the coming weeks, we will show you what this chatbot will eventually look like and what it means for customer support.

Stay tuned: in a multi-part series of articles, we will also share how our beta version works and how we improve on ChatGPT's capabilities for customer service.

Sign up for our newsletter here to get all the news and updates about our OMQ ChatGPT-level chatbot.

Also check out our blog if you want to know how Artificial Intelligence works in customer service and how chatbots are currently answering customer queries.

Any questions?

We are currently working on such a version and are excited to share it with you soon. If you have any further questions about it, feel free to contact us or request a demo without obligation. We are looking forward to seeing you! :)